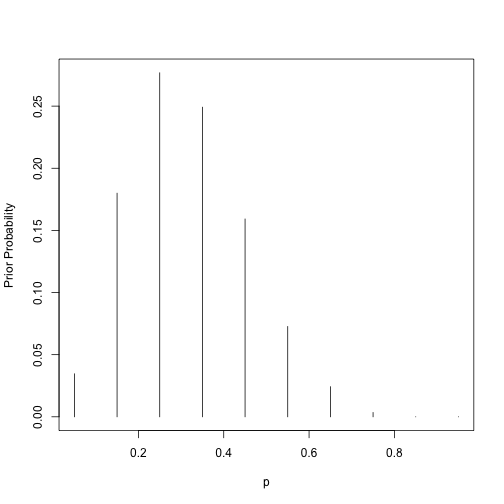

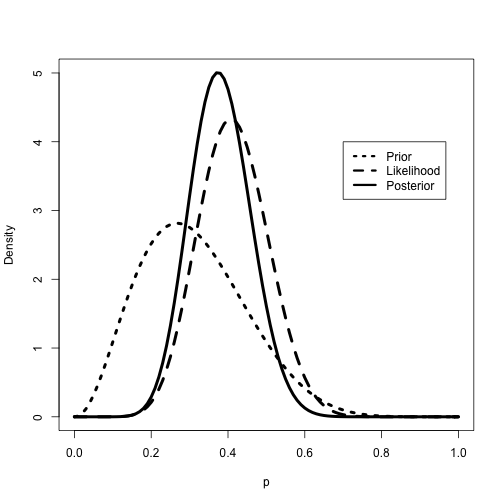

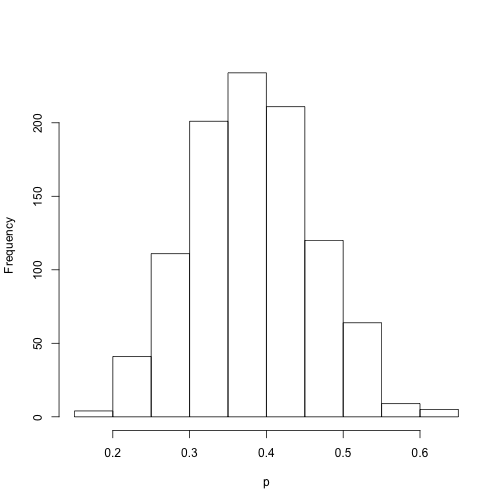

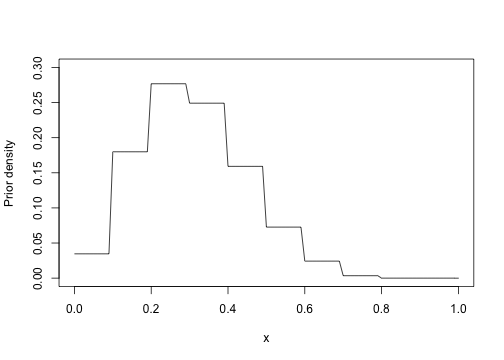

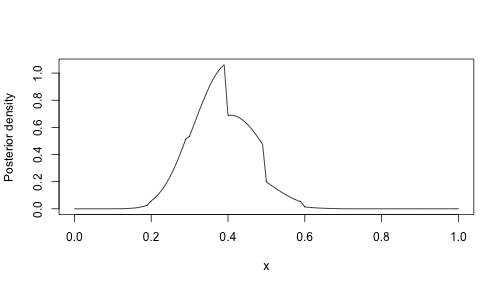

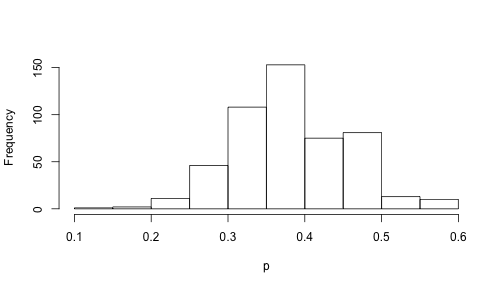

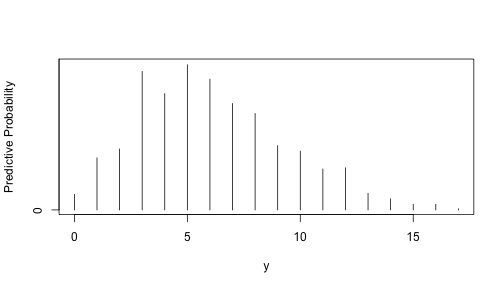

class: left, bottom, inverse, title-slide # Bayesian Statistics ## Lecture 2: Introduction to Bayesian Thinking ### Yanfei Kang ### 2019/09/03 (updated: 2019-09-16) --- # Introduction - We will consider a basic problem of learning about a population proportion. - Remind you our Bayesian inference idea: `$$\text{prior} + \text{data} \rightarrow \text{posterior}.$$` - We will illustrate the use of different functional forms for the **prior**. - From the posterior, one summarizes the probability distribution to perform **inferences** and one can also **predict** the likely outcomes of a new sample. --- class: inverse, center, middle # Example --- # Learning About the Proportion of Heavy Sleepers - Suppose a person is interested in learning about the sleeping habits of American college students. - She hears that doctors recommend eight hours of sleep for an average adult. - **What proportion of college students get at least eight hours of sleep?** --- # Learning About the Proportion of Heavy Sleepers Now we reorganize the problem. 1. **Population: ** all American college students. 2. `\(p\)`: the proportion of this population who sleep at least eight hours. 3. Our aim is to learn about the location of `\(p\)`, which is the unknown parameter. --- # Bayesian viewpoint - Use **probability distribution** to describe a person’s beliefs about the uncertainty in `\(p\)`. - This distribution reflects the person’s **subjective prior** opinion about `\(p\)`. - A random sample will be taken. - Before that, research on the sleeping habits to build a prior distribution (e.g. from internet articles or newspaper). - Prior beliefs: `\(p < 0.5\)`, can be any value between 0 and 0.5. --- # Bayesian viewpoint - Suppose our prior density for `\(p\)` is `\(g(p)\)`. - Regard "success" as "sleeping at least 8 hours". - We take a random sample with `\(s\)` successes and `\(f\)` failures. -- - Now we have the likelihood function `$$L(p) \propto p^{s}(1-p)^{f}, 0<p<1.$$` - The posterior for `\(p\)` can be obtained by Bayes' rule: `$$g(p|\text{data}) \propto g(p)L(p).$$` - Let's try three different choices of the prior density. --- class: inverse, center, middle # Using a Discrete Prior --- # Discrete Prior - List all possible values for `\(p\)` and assign weights for them. - `\(p\)` could be 0.05, 0.15, 0.25, 0.35, 0.45, 0.55, 0.65, 0.75, 0.85, 0.95. - Their weights are 1, 5.2, 8, 7.2, 4.6, 2.1, 0.7, 0.1, 0, 0. --- # Discrete Prior .pull-left[ ```r p = seq(0.05, 0.95, by = 0.1) prior = c(1, 5.2, 8, 7.2, 4.6, 2.1, 0.7, 0.1, 0, 0) prior = prior/sum(prior) plot(p, prior, type = "h", ylab="Prior Probability") ``` ] .pull-right[ <!-- --> ] --- # Likelihood - In our example, 11 of 27 students sleep a sufficient number of hours. - `\(s = 11\)` and `\(f = 16.\)` - Likelihood function: `$$L(p) \propto p^{11}(1-p)^{16}.$$` --- # Posterior .pull-left[ In R, `pdisc()` from the **LearnBayes** package computes the posterior probabilities. ```r library(LearnBayes); library(lattice) data = c(11, 16) post = pdisc(p, prior, data) # round(cbind(p, prior, post),2) PRIOR=data.frame("prior",p,prior) POST=data.frame("posterior",p,post) names(PRIOR)=c("Type","P","Probability") names(POST)=c("Type","P","Probability") data=rbind(PRIOR,POST) xyplot(Probability~P|Type,data=data,layout=c(1,2), type="h",lwd=3,col="black") ``` ] .pull-right[ <!-- --> ] --- class: inverse, center, middle # Using a Beta Prior --- # Beta Prior - `\(p\)` is a continuous parameter. - Alternative is to construct a density `\(g(p)\)` on [0, 1]. - Suppose she believes that the proportion is equally likely to be smaller or larger than `\(p = 0.3\)`. - And she is 90% confident that `\(p\)` is less than 0.5. - A convenient family of densities for a proportion is `$$g(p) \propto p^{a-1}(1-p)^{b-1}, 0<p<1.$$` - **How to select `\(a\)` and `\(b\)`?** --- # Beta Prior - Here the person believes that the median and 90th percentiles of the proportion are given, respectively, by 0.3 and 0.5. - ` beta.select()` in R can find the shape parameters of the beta density that match this prior knowledge. ```r quantile2=list(p=.9,x=.5) quantile1=list(p=.5,x=.3) beta.select(quantile1,quantile2) ``` ``` ## [1] 3.26 7.19 ``` --- # Posterior - Combining this beta prior with the likelihood function, one can show that the posterior density is also of the beta form with updated parameters `\(a + s\)` and `\(b + f\)`. `$$g(p | \text { data }) \propto p^{a+s-1}(1-p)^{b+f-1}, 0<p<1.$$` - This is an example of a **conjugate** analysis, where the prior and posterior densities have the same functional form. - the prior, likelihood, and posterior are all in the beta family. --- # Posterior .pull-left[ ```r # Plot beta prior, likelihood and posterior a = 3.26; b = 7.19; s = 11; f = 16 *curve(dbeta(x,a+s,b+f), from=0, to=1, * xlab="p",ylab="Density",lty=1,lwd=4) curve(dbeta(x,s+1,f+1),add=TRUE,lty=2,lwd=4) curve(dbeta(x,a,b),add=TRUE,lty=3,lwd=4) legend(.7,4,c("Prior","Likelihood","Posterior"), lty=c(3,2,1),lwd=c(3,3,3)) ``` ] .pull-right[ <!-- --> ] --- # Making Inferences via Posterior **1. Is it likely that the proportion of heavy sleepers is greater than 0.5?** **2. What is the 90% interval estimate for `\(p\)`?** ??? ```r # Exact inferences 1 - pbeta(0.5, a + s, b + f) ``` ``` ## [1] 0.0690226 ``` ```r qbeta(c(0.05, 0.95), a + s, b + f) ``` ``` ## [1] 0.2555267 0.5133608 ``` ```r # Inferences based on simulation ps = rbeta(1000, a + s, b + f) hist(ps,xlab="p",main="") ``` <!-- --> ```r sum(ps >= 0.5)/1000 ``` ``` ## [1] 0.061 ``` ```r quantile(ps, c(0.05, 0.95)) ``` ``` ## 5% 95% ## 0.2628391 0.5088270 ``` --- class: inverse, center, middle # Using a Histogram Prior --- # Posterior Computation ### A "brute-force" method of summarizing posterior computations for an prior `\(g(p)\)`. 1. Choose a grid of values of `\(p\)` over an interval that covers the posterior density. 2. Compute the product of the likelihood `\(L(p)\)` and the prior `\(g(p)\)` on the grid. 3. Normalize by dividing each product by the sum of the products. In this step, we are approximating the posterior density by a discrete probability distribution on the grid. 4. Take a random sample with replacement from the discrete distribution. --- # Histogram Prior Convenient to state the prior beliefs about `\(p\)` by dividing it into ten subintervals (0, 0.1), (0.1, 0.2), ..., (0.9, 1), and assign probabilities for them. .pull-left[ ```r midpt = seq(0.05, 0.95, by = 0.1) prior = c(1, 5.2, 8, 7.2, 4.6, 2.1, 0.7, 0.1, 0, 0) prior = prior/sum(prior) curve(histprior(x,midpt,prior), from=0, to=1, ylab="Prior density",ylim=c(0,.3)) ``` ] .pull-right[ <!-- --> ] --- # Posterior .pull-left[ ```r curve(histprior(x,midpt,prior) * dbeta(x,s+1,f+1), from=0, to=1, ylab="Posterior density") ``` <!-- --> ] .pull-right[ ```r p = seq(0, 1, length=500) post = histprior(p, midpt, prior) * dbeta(p, s+1, f+1) post = post/sum(post) ps = sample(p, replace = TRUE, prob = post) hist(ps, xlab="p", main="") ``` <!-- --> ] --- class: inverse, center, middle # Prediction --- # Prediction - Learnt about the population proportion of heavy sleepers `\(p\)`. - **What is the number of heavy sleepers `\(\tilde{y}\)` in a future sample of `\(m = 20\)` students?** - If the current beliefs about `\(p\)` are contained in the density `\(g(p)\)`, then the predictive density of `\(\tilde{y}\)` is given by `$$f(\tilde{y})=\int f(\tilde{y} | p) g(p) d p.$$` - If `\(g\)` is a prior density, then we refer to this as the **prior predictive density**. - If `\(g\)` is a posterior, then `\(f\)` is a **posterior predictive density**. --- # Predictive Density with Discrete Prior - Let `\(f_B(y|n,p)\)` denote the binomial sampling density given values of the sample size `\(n\)` and proportion `\(p\)`: `$$f_{B}(y | n, p)=\left(\begin{array}{l}{n} \\ {y}\end{array}\right) p^{y}(1-p)^{n-y}, y=0, \ldots, n.$$` - Then the predictive probability of `\(\tilde{y}\)` successes in a future sample of size `\(m\)` is given by `$$f(\tilde{y})=\sum f_{B}\left(\tilde{y} | m, p_{i}\right) g\left(p_{i}\right).$$` --- # Predictive Density with Discrete Prior **What is the number of heavy sleepers `\(\tilde{y}\)` in a future sample of `\(m = 20\)` students?** ```r p=seq(0.05, 0.95, by=.1) prior = c(1, 5.2, 8, 7.2, 4.6, 2.1, 0.7, 0.1, 0, 0) prior=prior/sum(prior) m=20; ys=0:20 pred=pdiscp(p, prior, m, ys) round(cbind(0:20,pred),3) ``` ``` ## pred ## [1,] 0 0.020 ## [2,] 1 0.044 ## [3,] 2 0.069 ## [4,] 3 0.092 ## [5,] 4 0.106 ## [6,] 5 0.112 ## [7,] 6 0.110 ## [8,] 7 0.102 ## [9,] 8 0.089 ## [10,] 9 0.074 ## [11,] 10 0.059 ## [12,] 11 0.044 ## [13,] 12 0.031 ## [14,] 13 0.021 ## [15,] 14 0.013 ## [16,] 15 0.007 ## [17,] 16 0.004 ## [18,] 17 0.002 ## [19,] 18 0.001 ## [20,] 19 0.000 ## [21,] 20 0.000 ``` --- # Predictive Density with Beta Prior - Suppose instead that we model our beliefs about `\(p\)` using a `\(\text{beta}(a, b)\)` prior. - We can analytically integrate out `\(p\)` to get a closed-form expression for the predictive density: `$$\begin{aligned} f(\tilde{y}) &=\int f_{B}(\tilde{y} | m, p) g(p) d p \\ &=\left(\begin{array}{c}{m} \\ {\tilde{y}}\end{array}\right) \frac{B(a+\tilde{y}, b+m-\tilde{y})}{B(a, b)}, \tilde{y}=0, \ldots, m \end{aligned}$$` --- # Predictive Density with Beta Prior **What is the number of heavy sleepers `\(\tilde{y}\)` in a future sample of `\(m = 20\)` students?** ```r ab=c(3.26, 7.19) m=20; ys=0:20 pred=pbetap(ab, m, ys) pred ``` ``` ## [1] 1.812205e-02 4.511485e-02 7.248106e-02 9.456396e-02 1.084896e-01 ## [6] 1.135841e-01 1.106895e-01 1.015339e-01 8.821389e-02 7.280839e-02 ## [11] 5.712007e-02 4.252974e-02 2.994441e-02 1.981684e-02 1.221463e-02 ## [16] 6.917948e-03 3.527752e-03 1.568885e-03 5.764529e-04 1.575142e-04 ## [21] 2.438293e-05 ``` --- # Predictive Density with Beta Prior - Bear in mind that one convenient way of computing a predictive density for *any* prior is by **simulation**. - To obtain `\(\tilde{y}\)`, we first simulate, say, `\(p^∗\)` from `\(g(p)\)`, and then simulate `\(\tilde{y}\)` from the binomial distribution `\(f_B(\tilde{y}|p)\)`. .pull-left[ ```r p=rbeta(1000, 3.26, 7.19) y = rbinom(1000, 20, p) freq=table(y) ys=as.integer(names(freq)) predprob=freq/sum(freq) plot(ys,predprob,type="h",xlab="y",ylab="Predictive Probability") ``` ] .pull-right[ <!-- --> ] --- # Predictive Density with Beta Prior - The probability that `\(\tilde{y}\)` falls in the interval {1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11} is 91.8%. - The probability this sample proportion falls in the interval [1/20, 11/20] is 91.8%. - Compare with the 91.8% probability interval for the population proportion p. - In predicting a future sample proportion, there are **two sources of uncertainty**: 1. the uncertainty in the value of `\(p\)`. 2. the uncertainty in the binomial uncertainty in the value of `\(\tilde{y}\)`. --- class: inverse, center, middle # Summary --- # Summary - Different priors. - Posterior distribution. - Inference. - Prediction.