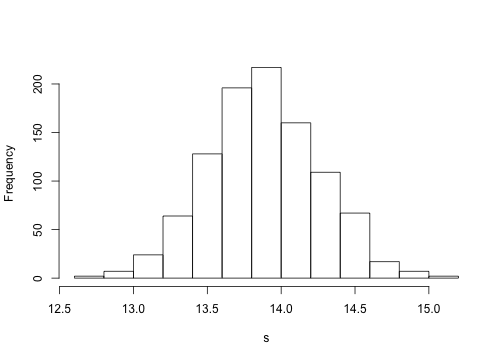

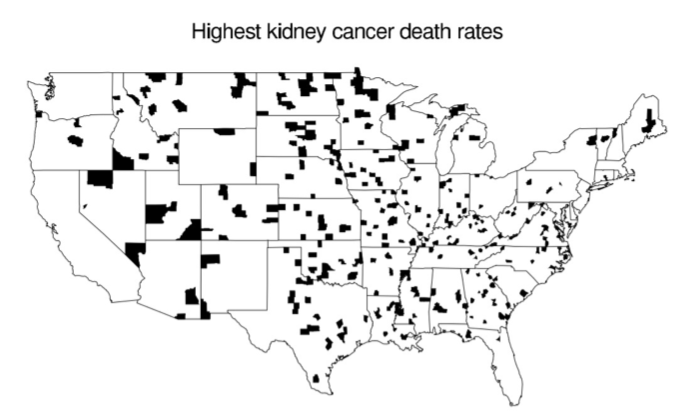

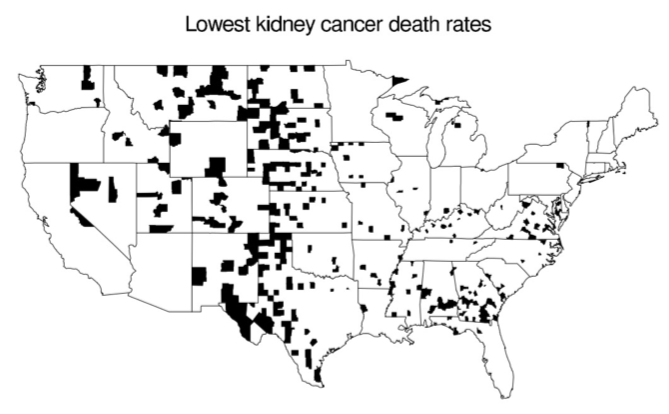

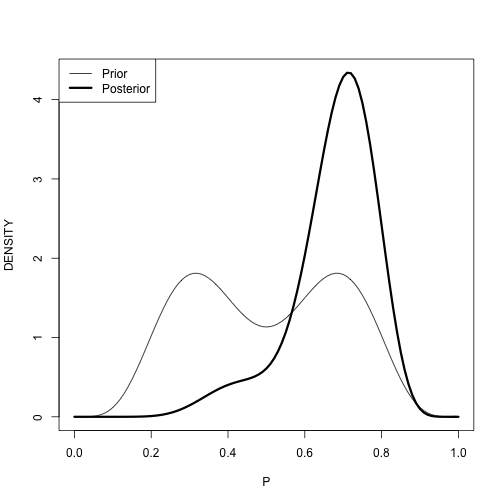

class: left, bottom, inverse, title-slide # Bayesian Statistics ## Lecture 3: Single-Parameter Models ### Yanfei Kang ### 2019/09/08 (updated: 2019-09-30) --- # Introduction - We will see several single-parameter models. - Bayesian inference for a variance for a normal population and inference for a Poisson mean when informative prior information is available. - One may have limited beliefs about a parameter. - Inferences may or may not be sensitive to the choice of prior. --- class: inverse, center, middle # Normal Distribution with Known Mean but Unknown Variance --- # Normal Distribution with Known Mean but Unknown Variance - We estimate an unknown variance using American football scores. - The focus is on the difference `\(d\)` between a game outcome (winning score minus losing score) and a published point spread. - We have observations: `\(d_1, d_2, \cdots, d_n\)`. - We assume they are normally distributed with mean 0 and variance `\(\sigma^2\)`. --- # ### Likelihood function `$$L\left(\sigma^{2}\right) \propto\left(\sigma^{2}\right)^{-n / 2} \exp \left\{-\sum_{i=1}^{n} d_{i}^{2} /\left(2 \sigma^{2}\right)\right\}, \sigma^{2}>0.$$` -- ### Prior We use the noninformative prior `$$p\left(\sigma^{2}\right) \propto 1 / \sigma^{2}.$$` -- ### Posterior `$$g\left(\sigma^{2} | \text { data }\right) \propto\left(\sigma^{2}\right)^{-n / 2-1} \exp \left\{-v /\left(2 \sigma^{2}\right)\right\},$$` where `\(v=\sum_{i=1}^{n} d_{i}^{2}.\)` --- # Now if we define precision parameter `\(P=1 / \sigma^{2},\)` 1. **What is the distribution of `\(P\)`?** 2. **What is the point estimate and a 95% probability interval for the standard deviation `\(\sigma\)`?** ??? - If `\(X\)` is distributed with density `\(f(x)\)`, then the density of `\(y = 1/x\)` is `\(g(y)=\frac{1}{y^{2}} f\left(\frac{1}{y}\right)\)`. - `\(P\)` is distributed as `\(U/v\)`. - `\(U\)` has a `\(\chi^2\)` distribution with `\(n\)` degrees of freedom. --- # Normal Dis. with Known Mean but Unknown Variance .pull-left[ ```r library(LearnBayes); data(footballscores); attach(footballscores) d = favorite - underdog - spread n = length(d); v = sum(d^2) # simulate from posterior *P = rchisq(1000, n)/v s = sqrt(1/P) quantile(s, probs = c(0.025, 0.5, 0.975)) hist(s,main="") ``` ] .pull-right[ ``` ## 2.5% 50% 97.5% ## 13.16735 13.85951 14.60327 ``` <!-- --> ] --- class: inverse, center, middle # Informative Prior for Cancer Rates --- # A Puzzling Pattern in a Map Let's look at the counties in the United States with the highest and lowest kidney cancer death rates during the 1980s (raw data is available [here](http://www.stat.columbia.edu/~gelman/book/data/cancer/)). .pull-left[ ] -- .pull-right[ ] -- **What patterns do you notice?** --- # Bayesian Inference for the Cancer Death Rate - The misleading patterns in the maps of raw rates suggest that a model-based approach to estimating the true underlying rates might be helpful. - In particular, it is natural to estimate the underlying cancer death rate in each county `\(j\)` using the model `$$y_{j} \sim \operatorname{Poisson}\left(10 n_{j} \theta_{j}\right),$$` where `\(y_j\)` is the number of kidney cancer deaths in county `\(j\)`, `\(n_j\)` is the population of the county, and `\(\theta_j\)` is the underlying rate in units of deaths per person per year. - Were we performing inference for just one of the counties, we would simply write `\(y \sim \operatorname{Poisson}(10 n \theta).\)` --- # Prior - A gamma distribution with parameters `\(\alpha = 20\)` and `\(\beta = 430,000\)` is a reasonable prior distribution for underlying kidney cancer death rates. <img src="gamma.png" width="600" style="display: block; margin: auto;" /> - This prior distribution has a mean of `\(\frac{\alpha}{\beta}=4.65 \times 10^{-5}\)` and standard deviation `\(\frac{\sqrt{\alpha}}{\beta}=1.04 \times 10^{-5}.\)` --- # Posterior - The posterior distribution of `\(θ_j\)` is then, `$$\theta_{j} | y_{j} \sim \operatorname{Gamma}\left(20+y_{j}, 430,000+10 n_{j}\right),$$` which has mean and variance, `$$\begin{aligned} \mathrm{E}\left(\theta_{j} | y_{j}\right) &=\frac{20+y_{j}}{430,000+10 n_{j}} \\ \operatorname{var}\left(\theta_{j} | y_{j}\right) &=\frac{20+y_{j}}{\left(430,000+10 n_{j}\right)^{2}} \end{aligned}$$` --- # Inference for a Small County: `\(n_j = 1000\)` - For this county, if `\(y_j = 0\)`, then the raw death rate is 0 but the posterior mean is `\(\frac{20}{440,000}=4.55 \times 10^{-5}.\)` - If `\(y_j = 1\)`, then the raw death rate is 1 per 1000 per 10 years, or `\(10^{−4}\)` per person-year (about twice as high as the national mean), but the posterior mean is only `\(\frac{21}{440,000}=4.77 \times 10^{-5}.\)` - If `\(y_j = 2\)`, then the raw death rate is an extremely high `\(2 \times 10^{−4}\)` per person-year, but `\(4.77 \times 10^{−5}\)`. The posterior mean is still only `\(\frac{22}{440,000}=5.00 \times 10^{-5}.\)` **With such a small population size, the data are dominated by the prior distribution.** --- # Inference for a Small County: `\(n_j = 1000\)` **But how likely, a priori, is it that `\(y_j\)` will equal 0, 1, 2, and so forth, for this county with `\(n_j = 1000?\)`** - We need to figure out the predictive distribution of `\(y_j\)`. - The Poisson model with gamma prior distribution has a negative binomial predictive distribution: `$$y_{j} \sim \operatorname{Neg}-\operatorname{bin}\left(\alpha, \frac{\beta}{10 n_{j}}\right).$$` - Other ways? --- # Inference for a Large County: `\(n_j = 1~\text{million}\)` **How many cancer deaths `\(y_j\)` might we expect to see in a ten-year period?** -- - Assume we found a median of 473 and a 50% interval of [393,545]. The raw death rate in such a county is then as likely or not to fall between `\(3.93 \times 10^{−5}\)` and `\(5.45 \times 10^{−5}\)`. - Bayesianly estimated or ‘Bayes-adjusted’ death rate? - if `\(y_j\)` takes on the low value of 393, then the raw death rate is `\(3.93 \times 10^{−5}\)` and the posterior mean is `\(\theta_j\)` is `\(\frac{20+393}{10^{7}+430.000}=3.96 \times 10^{-5}.\)` - if `\(y_j\)` takes on the low value of 545, then the raw death rate is `\(5.45 \times 10^{−5}\)` and the posterior mean is `\(5.41 \times 10^{-5}.\)` -- - **In this large county, the data dominate the prior distribution.** --- # A General Feature of Bayesian Inference - The posterior distribution is centered at a point that represents a compromise between the prior information and the data - The compromise is controlled to a greater extent by the data as the sample size increases. --- # Comparing Counties of Different Sizes .pull-left[ <img src="cancer1.png" width="600" style="display: block; margin: auto;" /><img src="cancer2.png" width="600" style="display: block; margin: auto;" /> ] .pull-right[ 1. Kidney cancer death rates v.s. the population size. 2. Replotted on the scale of `\(log_{10}\)` population to see the data more clearly. 3. Bayes-estimated posterior mean kidney cancer death rates 4. Posterior medians and 50% intervals for `\(\theta_j\)` for a sample of 100 counties `\(j\)`. ] --- class: inverse, center, middle # Mixtures of Conjugate Priors --- # Probability of a biased coin landing head - If `\(p\)` represents the probability that the coin lands heads. - Either `\(p\)` is in the neighborhood of 0.3 or in the neighborhood of 0.7. - It is equally likely that `\(p\)` is in one of the two neighborhoods. -- ### Prior .pull-left[This belief can be modeled using the prior density `$$g(p)=\gamma g_{1}(p)+(1-\gamma) g_{2}(p),$$` where `\(g_1\)` is beta(6, 14), `\(g_2\)` is beta(14, 6), and the mixing probability is `\(\gamma = 0.5\)`.] .pull-right[ <img src="betamix.png" width="300" style="display: block; margin: auto;" /> ] --- # Posterior Suppose we flip the coin `\(n\)` times, obtaining `\(s\)` heads and `\(f = n − s\)` tails. The posterior is `$$g(p | \text { data })=\gamma(\text { data }) g_{1}(p | \text { data })+(1-\gamma(\text { data })) g_{2}(p | \text { data }),$$` where `\(g_1\)` is beta(6 + `\(s\)`, 14 + `\(f\)`), `\(g_2\)` is beta(14 + `\(s\)`, 6 + `\(f\)`), and the mixing probability `\(\gamma(\text { data })\)` has the form `$$\gamma(\text { data })=\frac{\gamma f_{1}(s, f)}{\gamma f_{1}(s, f)+(1-\gamma) f_{2}(s, f)},$$` where `\(f_j(s,f)\)` is the prior predictive probability of `\(s\)` heads in `\(n\)` flips when `\(p\)` has the prior density `\(g_j\)`. --- # Posterior in R ```r probs=c(.5,.5) beta.par1=c(6, 14) beta.par2=c(14, 6) betapar=rbind(beta.par1, beta.par2) data=c(7,3) post=binomial.beta.mix(probs,betapar,data) post ``` ``` ## $probs ## beta.par1 beta.par2 ## 0.09269663 0.90730337 ## ## $betapar ## [,1] [,2] ## beta.par1 13 17 ## beta.par2 21 9 ``` --- # The prior and posterior densities .pull-left[ ```r curve(post$probs[1]*dbeta(x,13,17)+post$probs[2]*dbeta(x,21,9), from=0, to=1, lwd=3, xlab="P", ylab="DENSITY") curve(.5*dbeta(x,6,12)+.5*dbeta(x,12,6),0,1,add=TRUE) legend("topleft",legend=c("Prior","Posterior"),lwd=c(1,3)) ``` Your findings? ] .pull-right[ <!-- --> ] --- class: inverse, center, middle # Summary --- # Summary - Single-parameter models. - Bayesian inference for a variance for a normal population and inference for a Poisson mean when informative prior information is available. - One may have limited beliefs about a parameter. - Mixtures.