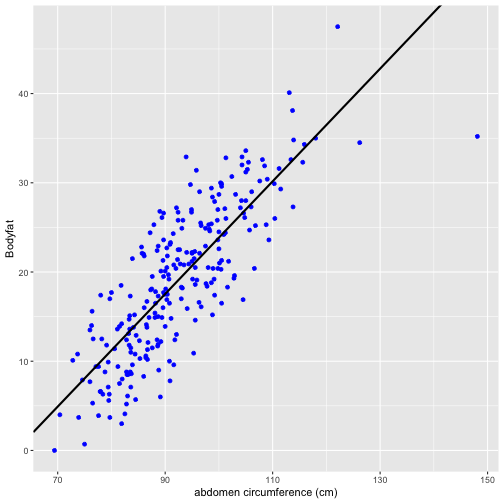

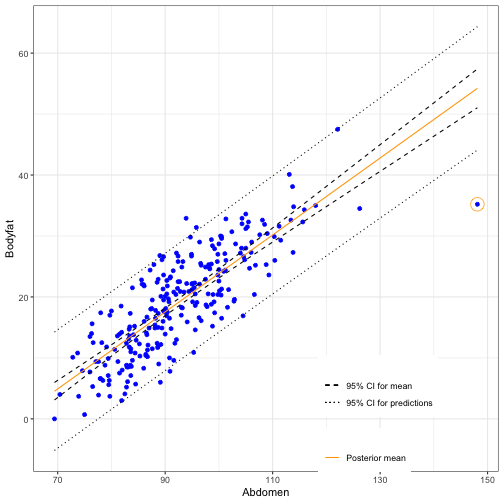

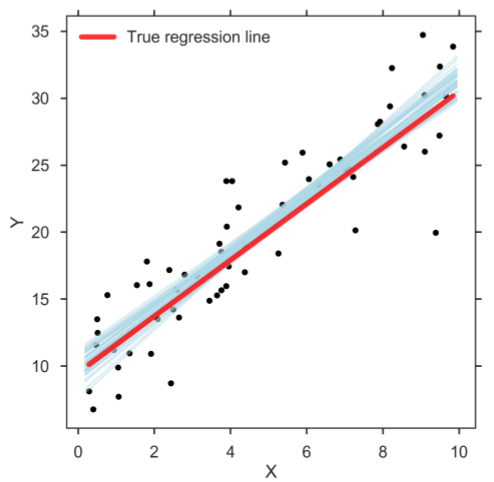

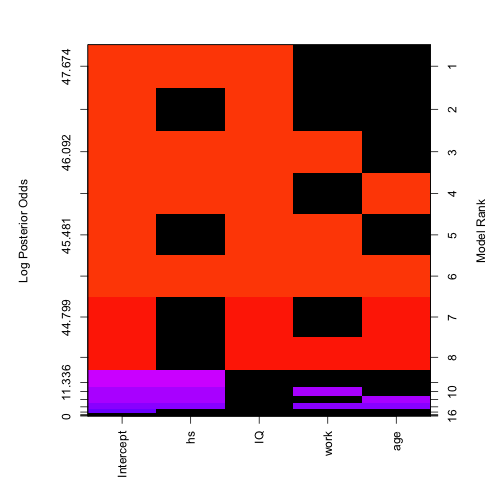

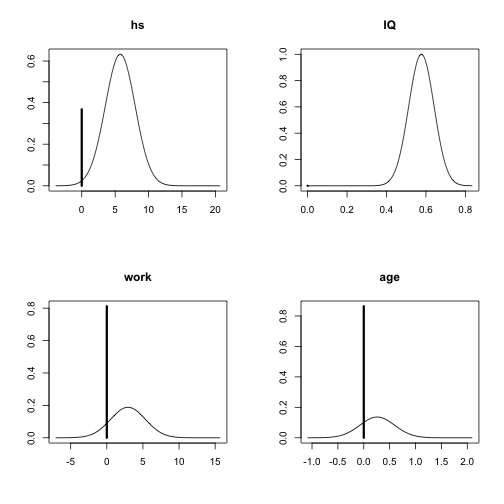

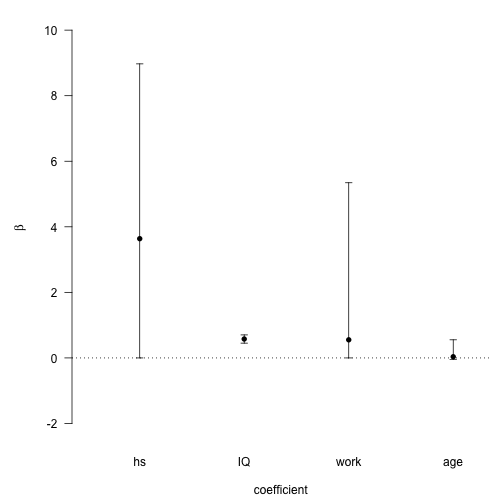

class: left, bottom, inverse, title-slide # Bayesian Statistics ## Lecture 8: Introduction to Bayesian Regression ### Yanfei Kang ### 2019/10/25 (updated: 2019-11-25) --- # What we have learnt - Bayesian basics. - Introduction to Bayesian computing. - Modern Bayesian computing algorithms. - We will see how to apply these techniques to modeling. - Let us first recall classical v.s. Bayesian. --- class: inverse, center, middle # A recap of Bayesian inference --- # Inference - Consider a simple problem of inference. - Suppose I am interested in the proportion of students in this class who are born in Beijing. This will be denoted as `\(\theta\)`. - In particular, I am interested in whether half of the class is born in Beijing ( `\(\theta=0.5\)`) or whether it is less than half ( `\(\theta<0.5\)`). - I am unable to ask everybody in the class if they were born in Beijing, I can only take a sample. --- # Classical Framework - In the **classical framework** `\(\theta\)` is NOT a random variable. It is a fixed number that is unknown. - Using the sample, an estimate `\(\hat{\theta}\)` can be obtained. - A `\(95\%\)` confidence interval around `\(\hat{\theta}\)` can be constructed. - The null hypothesis `\(\theta=0.5\)` can be tested against the alternative. --- # Intepretation in Classical Framework - Suppose the `\(95\%\)` confidence interval is (0.3-0.45). How is this interpreted? - Correct (classical) interpretation: - If an infinite number of samples is taken, `\(95\%\)` of the confidence intervals constructed in this way will contain the true value `\(\theta\)`. - Incorrect (classical) interpretation: - There is a `\(95\%\)` probability that `\(\theta\)` is in the interval 0.35-0.45. - Reason: `\(\theta\)` is not a random variable. --- # Intepretation in Classical Framework - Suppose `\(\hat{\theta}=0.4\)` and the Null is rejected at the `\(5\%\)` level of significance. - Correct (classical) interpretation: - If the null were true than the probability of observing `\(\hat{\theta}\leq 0.4\)` is less than the level of significance ( `\(5\%\)`). Therefore the null is rejected and we conclude `\(\hat{\theta}\leq 0.5\)`. - Incorrect (classical) interpretation: - The probability that `\(\theta\leq 0.5\)` is `\(95\%\)`. - Reason: `\(\theta\)` is not a random variable. --- # Bayesian Framework - There is another way to do statistical inference known as the **Bayesian Framework**. - It relies on a very different understanding of probability. - In the Bayesian framework probability distributions represent uncertainty about quantities that are unknown. - In our example, under the Bayesian framework `\(\theta\)` is a random variable. --- # Why Bayes? - Let `\({y}=(y_1,y_2,\ldots,y_n)'\)` be the data in the sample, and `\(\theta\)` be the unknown parameter of interest. - Inferences about `\(\theta\)` are based on the distribution `\(p(\theta|{y})\)`. This can be found using Bayes' Rule `$$p(\theta|{y})=\frac{p({y}|\theta)p(\theta)}{p({y})}$$` - Consider each term in the equation. --- # ### Question 1: what kind of inferences have we made using Bayesian framework? Examples? ### Question 2: what techniques have we learnt? Why do we need sampling algorithms? Examples? --- class: inverse, center, middle # The Bayesian linear regression model --- # Linear regression - Recall the linear regression model `$$y_i = \beta_0 + \beta_1 x_1 + ... + \beta_p x_p + \epsilon_i,$$` where `\(\epsilon_i \sim N(0, \sigma^2).\)` - We are intertested in the joint distribution of `\(\beta_0,\beta_1,...\beta_p\)` and `\(\sigma^2\)`. - You know how to get that by OLS under the Gaussian assumption. We have `\(\beta|\sigma^2 \sim N\left[(x'x)^{-1}x'y,(x'x)^{-1}\sigma^2\right]\)` and `\(\sigma^2 \sim \chi^2(n-p)\)`. --- # The Bayesian approach - We know by the Bayes' rule `$$\begin{aligned} p\left(\beta, \sigma^{2} | y, x\right) & \propto p\left(y | \beta, \sigma^{2}, x\right) p\left(\beta, \sigma^{2}\right) \\ &=p\left(y | \beta, \sigma^{2}, x\right) p\left(\beta | \sigma^{2}\right) p\left(\sigma^{2}\right) \end{aligned}$$` where `\(p(y|\beta,\sigma^2,x)\)` is the **likelihood** for the model and `\(p(\beta,\sigma^2) = p(\beta|\sigma^2)p(\sigma^2)\)` is the **prior** information of the parameters and `\(p(\beta,\sigma^2|y,x)\)` is called the **posterior**. - **The Bayesian way**: Let's just draw random samples from `\(p(\beta,\sigma^2|y,x)\)`. --- # Sampling the Posterior - Write down the likelihood function. - Specify the prior. - Write down the posterior. - Use Gibbs to draw. Set a initial value for `\(\beta^{(0)}\)` and `\(\sigma^{2(0)}\)`. - Draw a random vector `\(\beta^{(1)}\)` from `\(p(\beta^{(1)} | \sigma^{2(0)},y,x)\)` - Draw a random number `\(\sigma^{2(1)}\)` from `\(p(\sigma^{2(1)}| \beta^{(1)} ,y,x)\)` - Draw a random vector `\(\beta^{(2)}\)` from `\(p(\beta^{(2)} | \sigma^{2(1)},y,x)\)` - Draw a random number `\(\sigma^{2(2)}\)` from `\(p(\sigma^{2(2)}| \beta^{(2)} ,y,x)\)` - Draw a random vector `\(\beta^{(3)}\)` from `\(p(\beta^{(3)} | \sigma^{2(2)},y,x)\)` - Draw a random number `\(\sigma^{(3)}\)` from `\(p(\sigma^{2(3)}| \beta^{(3)} ,y,x)\)` - ... - ... - Summarize `\(\beta^{(1)},\beta^{(2)},...,\beta^{(n)}\)` - Summarize `\(\sigma^{(1)},\sigma^{(2)},...,\sigma^{(n)}\)` --- # Lab Work on random walk Metroplis within Gibbs for multiple linear regression. - Prior for `\(\beta\)`: `\(\beta \sim N(0, I)\)`. - Prior for `\(\sigma^2\)`: `\(\sigma^2 \sim \chi^2(10)\)`. - Write down the likelihood. - Write down the joint posterior. - Go to Gibbs. - Use random walk proposal for Metropolis. - Summary your samples. --- # Lab (continued) ```r ## Generate data for linear regression n <- 1000 p <- 3 epsilon <- rnorm(n, 0, 0.1) beta <- matrix(c(-3, 1, 3, 5)) X <- cbind(1, matrix(runif(n * p), n)) y <- X %*% beta + epsilon ``` --- # Lab (continued) ```r ## Log posterior library(mvtnorm) LogPosterior <- function(beta, sigma2, y, X) { p <- length(beta) ## The log likelihood function LogLikelihood <- sum(dnorm(x = y, mean = X %*% beta, sd = sqrt(sigma2), log = TRUE)) ## The priors LogPrior4beta <- dmvnorm(x = t(beta), mean = matrix(0, 1, p), sigma = diag(p), log = TRUE) LogPrior4sigma2 <- dchisq(x = sigma2, df = 10, log = TRUE) LogPrior <- LogPrior4beta + LogPrior4sigma2 ## The log posterior LogPosterior <- LogLikelihood + LogPrior return(LogPosterior) } ``` --- class: inverse, center, middle # Connections between Bayesian and OLS -- ### Let's come back to the simple regression model --- # Frequentist Ordinary Least Square (OLS) Simple Linear Regression - Obtaining accurate measurements of body fat is expensive and not easy to be done. - Instead, predictive models that predict the percentage of body fat which use readily available measurements such as abdominal circumference are easy to use and inexpensive. - Let's use a simple linear regression to predict body fat using abdominal circumference. - The data set `bodyfat` can be found from the library `BAS`. --- # Frequentist OLS ```r library(BAS) data(bodyfat) summary(bodyfat) # Frequentist OLS linear regression bodyfat.lm = lm(Bodyfat ~ Abdomen, data = bodyfat) summary(bodyfat.lm) ``` --- # The model This regression model can be formulated as `$$y_{i}=\alpha+\beta x_{i}+\epsilon_{i}, \quad i=1, \cdots, 252.$$` We have assumptions for the error `$$\epsilon_{i} \stackrel{\text { iid }}{\sim} \text { Normal }\left(0, \sigma^{2}\right).$$` --- # `lm` .pull-left[ ```r # Extract coefficients beta = coef(bodyfat.lm) # Visualize regression line on the scatter plot library(ggplot2) ggplot(data = bodyfat, aes(x = Abdomen, y = Bodyfat)) + geom_point(color = "blue") + geom_abline(intercept = beta[1], slope = beta[2], size = 1) + xlab("abdomen circumference (cm)") ``` ] .pull-right[ <!-- --> ] --- # OLS - We usually use the residuals for diagnostics and estimating the variance of the error term `\(\sigma^2\)`: `$$\hat{\sigma}^{2}=\frac{1}{n-2} \sum_{i}^{n}\left(y_{i}-\hat{y}_{i}\right)^{2}=\frac{1}{n-2} \sum_{i}^{n} \hat{\epsilon}_{i}^{2} = \frac{SSE}{n-2}.$$` - We have the estimates `$$\hat{\beta}=\frac{\sum_{i}\left(x_{i}-\bar{x}\right)\left(y_{i}-\bar{y}\right)}{\sum_{i}\left(x_{i}-\bar{x}\right)^{2}}=\frac{S_{x y}}{S_{x x}}, \quad \quad \hat{\alpha}=\bar{y}-\hat{\beta} \bar{x}=\bar{y}-\frac{S_{x y}}{S_{x x}} \bar{x}.$$` --- # OLS - The standard errors are given as `$$\begin{array}{l}{\operatorname{se}_{\alpha}=\sqrt{\frac{\operatorname{SSE}}{n-2}\left(\frac{1}{n}+\frac{\bar{x}^{2}}{\mathrm{S}_{x x}}\right)}=\hat{\sigma} \sqrt{\frac{1}{n}+\frac{\bar{x}^{2}}{\mathrm{S}_{x x}}}} \\ {\operatorname{se}_{\beta}=\sqrt{\frac{\mathrm{SSE}}{n-2} \frac{1}{\mathrm{S}_{x x}}}=\frac{\hat{\sigma}}{\sqrt{\mathrm{S}_{x x}}}}\end{array}$$` - We can then construct confidence intervals. --- # Bayesian way - Bear in mind our model This regression model can be formulated as `$$y_{i}=\alpha+\beta x_{i}+\epsilon_{i}, \epsilon_{i} \stackrel{\text { iid }}{\sim} \text { Normal }\left(0, \sigma^{2}\right).$$` - The Bayesian approach returns a whole distribution of so-called credible values for each parameter. - Credibility is a term used to express our belief in certain parameter values. - The benefit of working with distributions is that they provide a natural way to reflect uncertainty of estimates. --- # Bayesian way - Can you write down the likelihood? - We now use non-informative prior: `$$p\left(\alpha, \beta, \sigma^{2}\right) \propto \frac{1}{\sigma^{2}}.$$` --- # The joint posterior `$$\begin{aligned} p^{*}\left(\alpha, \beta, \sigma^{2} | y_{1}, \cdots, y_{n}\right) & \propto\left[\prod_{i}^{n} p\left(y_{i} | x_{i}, \alpha, \beta, \sigma^{2}\right)\right] p\left(\alpha, \beta, \sigma^{2}\right) \\ & \propto\left[\left(\frac{1}{\left(\sigma^{2}\right)^{1 / 2}} \exp \left(-\frac{\left(y_{1}-\left(\alpha+\beta x_{1}\right)\right)^{2}}{2 \sigma^{2}}\right)\right) \times \cdots\right.\\ &\left.\times\left(\frac{1}{\left(\sigma^{2}\right)^{1 / 2}} \exp \left(-\frac{\left(y_{n}-\left(\alpha+\beta x_{n}\right)\right)^{2}}{2 \sigma^{2}}\right)\right)^{2}\right] \times\left(\frac{1}{\sigma^{2}}\right) \\ & \propto \frac{1}{\left(\sigma^{2}\right)^{(n+2) / 2}} \exp \left(-\frac{\sum_{i}\left(y_{i}-\alpha-\beta x_{i}\right)^{2}}{2 \sigma^{2}}\right) \end{aligned}.$$` --- # The marginal posterior `$$\beta | y_{1}, \cdots, y_{n} \sim \mathrm{t}\left(n-2, \hat{\beta}, \frac{\hat{\sigma}^{2}}{\mathrm{S}_{x x}}\right)=\mathrm{t}\left(n-2, \hat{\beta},\left(\mathrm{se}_{\beta}\right)^{2}\right).$$` `$$\alpha | y_{1}, \cdots, y_{n} \sim \mathrm{t}\left(n-2, \hat{\alpha}, \hat{\sigma}^{2}\left(\frac{1}{n}+\frac{\bar{x}^{2}}{\mathrm{S}_{x x}}\right)\right)=\mathrm{t}\left(n-2, \hat{\alpha},\left(\mathrm{se}_{\alpha}\right)^{2}\right).$$` `$$\phi=\frac{1}{\sigma^{2}} | y_{1}, \cdots, y_{n} \sim \text { Gamma }\left(\frac{n-2}{2}, \frac{\mathrm{SSE}}{2}\right).$$` --- # The conditional posterior `$$\beta | \sigma^{2}, \text { data } \sim \text { Normal }\left(\hat{\beta}, \frac{\sigma^{2}}{\mathrm{S}_{x x}}\right).$$` `$$\alpha | \sigma^{2}, \text { data } \sim \text { Normal }\left(\hat{\alpha}, \sigma^{2}\left(\frac{1}{n}+\frac{x^{2}}{\mathrm{S}_{x x}}\right)\right).$$` --- # Credible intervals (CI) for `\(\alpha\)` and `\(\beta\)` ```r output = summary(bodyfat.lm)$coef[, 1:2] out = cbind(output, confint(bodyfat.lm)) colnames(out) = c("posterior mean", "posterior std", "2.5", "97.5") round(out, 2) ``` ``` ## posterior mean posterior std 2.5 97.5 ## (Intercept) -39.28 2.66 -44.52 -34.04 ## Abdomen 0.63 0.03 0.58 0.69 ``` --- # Credible intervals for the mean The mean at point `\(x_i\)` is `$$\mu_{Y}\left|x_{i}=E\left[Y | x_{i}\right]=\alpha+\beta x_{i}\right.$$` For the mean, we have its posterior `$$\alpha+\beta x_{i} | \text { data } \sim t\left(n-2, \hat{\alpha}+\hat{\beta} x_{i}, \mathrm{S}_{Y | X_{i}}^{2}\right),$$` where `$$\mathrm{S}_{Y | X_{i}}^{2}=\hat{\sigma}^{2}\left(\frac{1}{n}+\frac{\left(x_{i}-\bar{x}\right)^{2}}{\mathrm{S}_{x x}}\right)$$`. --- # Credible intervals for the prediction Any new predition `\(y_{n+1}\)` at a point `\(x_{n+1}\)` also follows `\(t\)`-distribution. For the prediction, we have `$$\begin{array}{l}{\qquad y_{n+1} | \text { data, } x_{n+1} \sim \mathrm{t}\left(n-2, \hat{\alpha}+\hat{\beta} x_{n+1}, \mathrm{S}_{Y | X_{n+1}}^{2}\right)} \\ \\ {\qquad \mathrm{S}_{Y | X_{n+1}}^{2}=\hat{\sigma}^{2}+\hat{\sigma}^{2}\left(\frac{1}{n}+\frac{\left(x_{n+1}-\bar{x}\right)^{2}}{\mathrm{S}_{x x}}\right)=\hat{\sigma}^{2}\left(1+\frac{1}{n}+\frac{\left(x_{n+1}-\bar{x}\right)^{2}}{\mathrm{S}_{x x}}\right)}\end{array}$$` **The variance for predicting a new observation `\(y_{n+1}\)` has an extra `\(\hat{\sigma}^{2}\)` which comes from the uncertainty of a new observation about the mean `\(\mu_Y\)` estimated by the regression line.** --- # Credible intervals .pull-left[ ```r library(ggplot2) # Construct current prediction alpha = bodyfat.lm$coefficients[1] beta = bodyfat.lm$coefficients[2] new_x = seq(min(bodyfat$Abdomen), max(bodyfat$Abdomen), length.out = 100) y_hat = alpha + beta * new_x # Get lower and upper bounds for mean ymean = data.frame(predict(bodyfat.lm, newdata = data.frame(Abdomen = new_x), interval = "confidence", level = 0.95)) # Get lower and upper bounds for prediction ypred = data.frame(predict(bodyfat.lm, newdata = data.frame(Abdomen = new_x), interval = "prediction", level = 0.95)) output = data.frame(x = new_x, y_hat = y_hat, ymean_lwr = ymean$lwr, ymean_upr = ymean$upr, ypred_lwr = ypred$lwr, ypred_upr = ypred$upr) # Extract potential outlier data point outlier = data.frame(x = bodyfat$Abdomen[39], y = bodyfat$Bodyfat[39]) # Scatter plot of original plot1 = ggplot(data = bodyfat, aes(x = Abdomen, y = Bodyfat)) + geom_point(color = "blue") # Add bounds of mean and prediction plot2 = plot1 + geom_line(data = output, aes(x = new_x, y = y_hat, color = "first"), lty = 1) + geom_line(data = output, aes(x = new_x, y = ymean_lwr, lty = "second")) + geom_line(data = output, aes(x = new_x, y = ymean_upr, lty = "second")) + geom_line(data = output, aes(x = new_x, y = ypred_upr, lty = "third")) + geom_line(data = output, aes(x = new_x, y = ypred_lwr, lty = "third")) + scale_colour_manual(values = c("orange"), labels = "Posterior mean", name = "") + scale_linetype_manual(values = c(2, 3), labels = c("95% CI for mean", "95% CI for predictions") , name = "") + theme_bw() + theme(legend.position = c(1, 0), legend.justification = c(1.5, 0)) # Identify potential outlier plot2 + geom_point(data = outlier, aes(x = x, y = y), color = "orange", pch = 1, cex = 6) ``` ] .pull-right[ <!-- --> ] --- # Interpretation of CI ```r pred.39 = predict(bodyfat.lm, newdata = bodyfat[39, ], interval = "prediction", level = 0.95) out = cbind(bodyfat[39,]$Abdomen, pred.39) colnames(out) = c("abdomen", "prediction", "lower", "upper") out ``` ``` ## abdomen prediction lower upper ## 39 148.1 54.21599 44.0967 64.33528 ``` --- # Lab .pull-left[ - Use Bayesian framework to work out the simple linear regression. - Bear in mind that in Bayesian framework, you actually get a distribution of credible regression lines (see the right figure), representing the uncertainty in the parameter estimates. - (Optional) Calculate and plot the credible intervals for the mean and the prediction (see slide 33). ] .pull-right[  ] --- class: inverse, center, middle # Bayesian Model Choice --- # Bayesian Information Criterion (BIC) We will see Bayesian model selections using the Bayesian information criterion, or BIC: `$$\mathrm{BIC}=-2 \ln (\text { likelihood })+(p+1) \ln (n),$$` where `\(n\)` is the number of observations, i is the number of predictors. Remind you that in simple lienar regresssion: `$$\text { likelihood }=p\left(y_{i} | \alpha, \beta, \sigma^{2}\right)=\mathcal{L}\left(\alpha, \beta, \sigma^{2}\right)$$` --- # BIC More generally, `$$\text { likelihood }=p(\text { data } | \boldsymbol{\theta}, M)=\mathcal{L}(\boldsymbol{\theta}, M).$$` In the definition of BIC, the likelihood is `$$\text { likelihood }=p(\text { data } | \hat{\boldsymbol{\theta}}, M)=\mathcal{L}(\hat{\boldsymbol{\theta}}, M).$$` --- # BIC and `\(R^2\)` If we connect BIC and `\(R^2\)`, we have `$$\mathrm{BIC}=n \ln \left(1-R^{2}\right)+(p+1) \ln (n)+\text { constant }.$$` - Increasing `\(p\)`, will result in larger `\(R^2\)`. - Larger `\(R^2\)` means better goodness of fit, large `\(p\)` leads to overfitting. - The second term is to penalize models with too many predictors. - Trade off between the goodness of fit given by the first term and the model complexity represented by the second term. --- # Backward Elimination with BIC We will use the kid’s cognitive score data set `cognitive`. ```r # Load the library in order to read in data from website library(foreign) # Read in cognitive score data set and process data tranformations cognitive = read.dta("http://www.stat.columbia.edu/~gelman/arm/examples/child.iq/kidiq.dta") cognitive$mom_work = as.numeric(cognitive$mom_work > 1) cognitive$mom_hs = as.numeric(cognitive$mom_hs > 0) colnames(cognitive) = c("kid_score", "hs","IQ", "work", "age") ``` --- # Backward Elimination with BIC ```r n = nrow(cognitive) cog.lm = lm(kid_score ~ ., data=cognitive) cog.step = stats::step(cog.lm, k=log(n)) ``` Your findings? --- # Bayesian Model Uncertainty - We discussed how to use BIC to pick the best model. - In reality, we may often have several models with similar BIC. - If we only pick the one with the lowest BIC, we may ignore the presence of other models that are equally good or can provide useful information. - The credible intervals of coefficients may be narrower since the uncertainty is being ignored when we consider only one model. - Narrower intervals are not always better if they miss the true values of the parameters. - To account for the uncertainty, getting the posterior probability of all possible models is necessary. --- # Model Uncertainty - To represent model uncertainty, we need to construct a probability distribution over all possible models where the each probability provides measure of how likely the model is to happen. - Suppose we have a multiple linear regression `$$y_{i}=\beta_{0}+\beta_{1}\left(x_{1, i}-\bar{x}_{1}\right)+\beta_{2}\left(x_{2, i}-\bar{x}_{2}\right)+\cdots+\beta_{p}\left(x_{p, i}-\bar{x}_{p}\right)+\epsilon_{i}, \quad 1 \leq i \leq n,$$` how many models in total? --- # Model uncertainty - Denote each model as `\(M_m, m = 1, 2, \cdots, 2^p\)`. - We want to calculate `\(p\left(M_{m} | \text { data }\right)\)`: `$$p\left(M_{m} | \text { data }\right)=\frac{\text { marginal likelihood of } M_{m} \times p\left(M_{m}\right)}{\sum_{j=1}^{2^p} \text { marginal likelihood of } M_{j} \times p\left(M_{j}\right)}=\frac{p\left(\text { data } | M_{m}\right) p\left(M_{m}\right)}{\sum_{j=1}^{2^p} p\left(\operatorname{data} | M_{m}\right) p\left(M_{m}\right)}.$$` - Bayes factor `$$B F\left[M_{1}: M_{2}\right]=\frac{p\left(\text { data } | M_{1}\right)}{p\left(\text { data } | M_{2}\right)}.$$` --- # Calculating Posterior Probability in R ```r library(BAS) # Use `bas.lm` for regression cog_bas = bas.lm(kid_score ~ hs + IQ + work + age, data = cognitive) ``` --- # Visualisation .pull-left[ ```r image(cog_bas) ``` ] .pull-right[ <!-- --> ] --- # Calculating Posterior Probability in R ```r round(summary(cog_bas), 3) ``` ``` ## P(B != 0 | Y) model 1 model 2 model 3 model 4 model 5 ## Intercept 1.000 1.000 1.000 1.000 1.000 1.000 ## hs 0.632 1.000 0.000 1.000 1.000 0.000 ## IQ 1.000 1.000 1.000 1.000 1.000 1.000 ## work 0.188 0.000 0.000 1.000 0.000 1.000 ## age 0.136 0.000 0.000 0.000 1.000 0.000 ## BF NA 1.000 0.429 0.137 0.095 0.112 ## PostProbs NA 0.440 0.284 0.091 0.063 0.049 ## R2 NA 0.214 0.201 0.216 0.215 0.206 ## dim NA 3.000 2.000 4.000 4.000 3.000 ## logmarg NA 45.193 44.347 43.206 42.842 43.000 ``` --- # Calculating Posterior Probability in R ```r print(cog_bas) ``` ``` ## ## Call: ## bas.lm(formula = kid_score ~ hs + IQ + work + age, data = cognitive) ## ## ## Marginal Posterior Inclusion Probabilities: ## Intercept hs IQ work age ## 1.0000 0.6324 1.0000 0.1883 0.1363 ``` This is obtained by summing the posterior model probabilities across models for each predictor: `$$P(\beta \neq 0 | D)=\sum_{k \in\{i: \beta \neq 0 \text{ in } M_i\}} P\left(M_{k} | D\right)$$` --- # Bayesian model averaging - Once we have obtained the posterior probability of each model, we can make inference and obtain weighted averages of quantities of interest using these probabilities as weights. - Models with higher posterior probabilities receive higher weights, while models with lower posterior probabilities receive lower weights. - This gives the name **Bayesian Model Averaging** (BMA). - e.g., the probability of the next prediction `\(\hat{Y}^*\)` after seeing the data can be calculated as a “weighted average” of the prediction of next observation `\(\hat{Y_j}^*\)` with the posterior probability of `\(M_j\)` being the weight: `$$\hat{Y}^{*}=\sum_{j=1}^{2^{p}} \hat{Y}_{j}^{*} p\left(M_{j} | \text { data }\right).$$` --- # BMA - More generally, we can use this weighted average formula to obtain the value of a quantity of interest `\(\Delta\)`, `$$p(\Delta | \text { data })=\sum_{j=1}^{2^{p}} p\left(\Delta | M_{j}, \text { data }\right) p\left(M_{j} | \text { data }\right).$$` - We then have `$$E[\Delta | \text { data }]=\sum_{j=1}^{2^p} E\left[\Delta | M_{j}, \operatorname{data}\right] p\left(M_{j} | \text { data }\right).$$` --- # Coefficient Summary under BMA ```r coef(cog_bas) ``` ``` ## ## Marginal Posterior Summaries of Coefficients: ## ## Using BMA ## ## Based on the top 16 models ## post mean post SD post p(B != 0) ## Intercept 86.79724 0.87276 1.00000 ## hs 3.63750 3.29677 0.63239 ## IQ 0.57679 0.06365 1.00000 ## work 0.55219 1.54807 0.18831 ## age 0.03551 0.15273 0.13633 ``` --- # Posterior distributions of coefficients .pull-left[ ```r par(mfrow = c(2, 2)) plot(coef(cog_bas), subset = c(2:5), ask = F) ``` - The vertical bar represents the posterior probability that the coefficient is 0. - The curve represents the density of plausible values from all the models where the coefficient is non-zero. ] .pull-right[ <!-- --> ] --- # Posterior distributions of coefficients .pull-left[ ```r plot(confint(coef(cog_bas), parm = 2:5)) ``` This uses Monte Carlo sampling to draw from the mixture model over coefficient where models are sampled based on their posterior probabilities. ] .pull-right[ <!-- --> ``` ## NULL ``` ] --- # Prediction ```r # Predict with model averaging predict(cog_bas, estimator = 'BMA') # Predict with model selection predict(cog_bas, estimator = 'HPM') # Predict with BMA plot(confint(predict(cog_bas, estimator = 'BMA', se.fit = TRUE))) ``` --- # Conclusion - Bayesian linear regression. - Connections between Bayesian regression and OLS. - Bayesian model selection and model averaging.