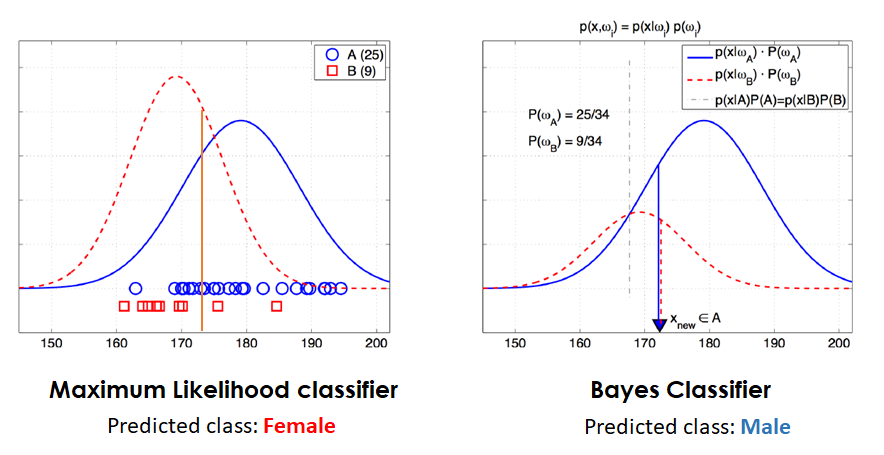

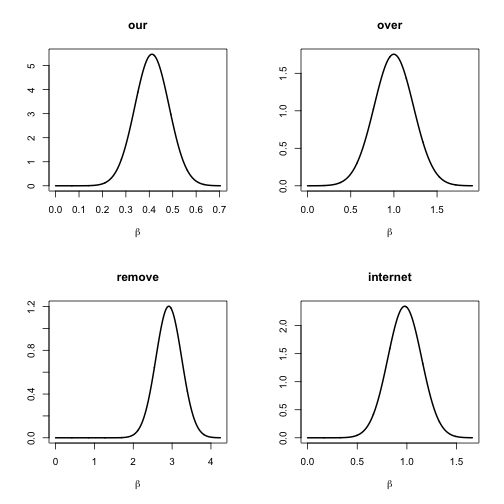

class: left, bottom, inverse, title-slide # Bayesian Statistics ## Lecture 9: Applications of Bayesian Methods ### Yanfei Kang ### 2019/10/25 (updated: 2019-12-02) --- # Applications of Bayesian Methods - Classification - Forecasting --- class: inverse, center, middle # Classification --- # Classification - We have seen how Bayes’ theorem can be used for regression, by estimating the parameters of a linear model, and Bayesian model averaging. - Now let us have some understandings of Bayesian classification. --- # Bayesian Classification - Imagine we have a classification problem with `\(C\)` different classes. - The thing we are after here is the class probability for each class `\(c\)`. - Like in the previous regression case, we also differentiate between prior and posterior probabilities - Now we have prior class probabilities `\(p(c)\)` and posterior class probabilities, after using data or observations `\(p(c|x)\)`: `$$p(c|x) = \frac{p(x|c)p(c)}{p(x)}.$$` --- # Example - Imagine we have measured the height of 34 individuals: 25 males (blue) and 9 females (red). - We get a new height observation of 172cm which we want to classify as male or female. - The following figure represents the predictions obtained using a Maximum likelihood classifier and a Bayesian classifier. --- # Example  --- # Example - We have used the number of samples in the training data as the prior knowledge for our class distributions, but if for example we were doing this same differentiation between height and gender for a specific country, and we knew the woman there are specially tall, and also knew the mean height of the men, we could have used this information to build our prior class distributions. - As we can see from the example, using these prior knowledge leads to different results than not using them. Assuming this previous knowledge is of high quality, these predictions should be more accurate than similar trials that don’t incorporate this information. --- # Naive Bayes - By Bayes's theorem, `\(p(c|x) \propto p(x|c)p(c).\)` - `\(p(x|c)\)` can be `\(N(\theta_c, \Sigma_c)\)`. It can be very high-dimensional and hard to estimate. - Even with binary features, the outcome space of `\(p(x|c)\)` can be huge. - **Naive Bayes**: features are assumed independent: `$$p(x|c) \prod_{i}^{n}p(x_i|c).$$` --- # Classification with logistic regression - Response is assumed to be binary. - Example: Spam/Ham. Covariates: symbols, etc. - Logistic regression: `$$p(y_i = 1|x_i) = \frac{\exp(x_i^{'}\beta)}{1 + \exp(x_i^{'}\beta)}.$$` - Likelihood: `$$p(y | X, \beta) = \prod_i^n \frac{(\exp(x_i^{'}\beta))^{y_i}}{1 + \exp(x_i^{'}\beta)}.$$` - Prior: `\(\beta \sim N(0, \tau^2I)\)`. --- # Normal approximation of posterior - Remember MAP? - How to realize normal approximation in R? --- # Example: spam data ```r # Loading data from file Data<-read.table("SpamReduced.dat",header=TRUE) # Spam data from Hastie et al. y <- as.vector(Data[,1]) # Data from the read.table function is a data frame. Let's convert y and X to vector and matrix. X <- as.matrix(Data[,2:17]) covNames <- names(Data)[2:length(names(Data))] chooseCov <- c(1:16) # Here we choose which covariates to include in the model X <- X[,chooseCov] # Here we pick out the chosen covariates. covNames <- covNames[chooseCov] nPara <- dim(X)[2] ``` --- # Example: prior and posterior ```r library("mvtnorm") # Setting up the prior tau <- 10000 mu <- as.vector(rep(0, nPara)) # Prior mean vector Sigma <- tau^2 * diag(nPara) LogPostLogistic <- function(betaVect, y, X, mu, Sigma) { nPara <- length(betaVect) linPred <- X %*% betaVect # evaluating the log-likelihood logLik <- sum(linPred * y - log(1 + exp(linPred))) if (abs(logLik) == Inf) logLik = -20000 # Likelihood is not finite, stear the optimizer away from here! # evaluating the prior logPrior <- dmvnorm(betaVect, matrix(0, nPara, 1), Sigma, log = TRUE) # add the log prior and log-likelihood together to get log posterior return(logLik + logPrior) } ``` --- # Normal approximation ```r # Different starting values. Ideally, any random starting value gives you the same optimum (i.e. optimum is unique) initVal <- as.vector(rep(0,dim(X)[2])) # Or a random starting vector: as.vector(rnorm(dim(X)[2])) # Set as OLS estimate: as.vector(solve(crossprod(X,X))%*%t(X)%*%y) # Initial values by OLS OptimResults<-optim(initVal,LogPostLogistic,gr=NULL,y,X,mu,Sigma,method=c("BFGS"),control=list(fnscale=-1),hessian=TRUE) # Printing the results to the screen postMode <- OptimResults$par postCov <- -solve(OptimResults$hessian) # Posterior covariance matrix is -inv(Hessian) names(postMode) <- covNames # Naming the coefficient by covariates approxPostStd <- sqrt(diag(postCov)) # Computing approximate standard deviations. names(approxPostStd) <- covNames # Naming the coefficient by covariates ``` --- # Normal approximation ```r print('The posterior mode is:') ``` ``` ## [1] "The posterior mode is:" ``` ```r print(postMode) ``` ``` ## our over remove internet free ## 0.4114551342 0.9986162554 2.9146026434 0.9783776542 1.3102895310 ## hpl X. X..1 CapRunMax CapRunTotal ## -1.2004161084 0.5579120757 8.3543566005 0.0118452359 0.0005533588 ## const hp george X1999 re ## -1.3998651455 -2.0937445014 -6.1211174276 -0.4485509937 -0.8528582370 ## edu ## -1.8631082896 ``` --- # Normal approximation ```r print('The approximate posterior standard deviation is:') ``` ``` ## [1] "The approximate posterior standard deviation is:" ``` ```r print(approxPostStd) ``` ``` ## our over remove internet free ## 0.0729231509 0.2274514097 0.3316596541 0.1701291785 0.1428728759 ## hpl X. X..1 CapRunMax CapRunTotal ## 0.3864394114 0.0943121714 0.6891839053 0.0017611534 0.0001418734 ## const hp george X1999 re ## 0.0844039311 0.3029177356 1.1870255344 0.1887243057 0.1472771597 ## edu ## 0.2752313274 ``` --- # Normal approximation .pull-left[ ```r par(mfrow = c(2,2)) for (k in 1:4){ betaGrid <- seq(0, postMode[k] + 4*approxPostStd[k], length = 1000) plot(betaGrid, dnorm(x = betaGrid, mean = postMode[k], sd = approxPostStd[k]), type = "l", lwd = 2, main = names(postMode)[k], ylab = '', xlab = expression(beta)) } ``` ] .pull-right[ <!-- --> ] --- class: inverse, center, middle # Forecasting --- # Time Series Analysis vs Forecasting - They are different topics. - Time series analysis is a description of what has already happened. - Forecasting is a view of an uncertain future. - One can analyse time series data without regard to forecasting, just as one can make a forecast without regard to time series (or any other) analysis. --- # The Bayesian Approach to Forecasting - Model building is an art - there is no single true model. - A forecast is a statement about an uncertain future. - It is a belief, instead of a statement of fact. - Whenever we make a forecast we actually make a statement of probability or distribution that quantifies the nature of our uncertainty. - Forecasts are therefore conditional probability statements, the conditioning being on the existing state of knowledge. --- # Bayesian forecasting - The Bayesian paradigm: quantifies uncertainty about: **unknown|known** using a **distribution**. - In forecasting the unknown quantity of interest is `\(y_{T+1}\)`: `$$\begin{aligned} p\left(y_{T+1} | \mathbf{y}\right) &=\int_{\theta} p\left(y_{T+1}, \boldsymbol{\theta} | \mathbf{y}\right) d \boldsymbol{\theta} \\ &=\int_{\theta} p\left(y_{T+1} | \boldsymbol{\theta}, \mathbf{y}\right) p(\boldsymbol{\theta} | \mathbf{y}) d \boldsymbol{\theta} \\ &=E_{\theta | \mathbf{y}}\left[p\left(y_{T+1} | \boldsymbol{\theta}, \mathbf{y}\right)\right] \end{aligned}.$$` - Bayesian forecasting is to **evaluate the posterior expectation**. --- # Bayesian forecasting Let us consider autoregressive (AR) process: `$$y_t = \mu + \phi_1(y_{t-1} - \mu) + \cdots + \phi_p(y_{t-p} - \mu) + \epsilon_t,~\epsilon_t\sim N(0, \sigma^2).$$` The simulation algorithm is to **repeat `\(N\)` times**: 1. Generate a posterior draw of `\(\theta^{(1)} = (\phi_1^{(1)}, \cdots, \phi_p^{(1)}, \mu^{(1)}, \sigma^{(1)})\)`. 2. Generate a predictive draw of future time series by: - `\(\tilde{y}_{T+1} \sim p(y_{T+1}| y_T, \cdots, y_{T-p}, \theta^{(1)}).\)` - `\(\tilde{y}_{T+2} \sim p(y_{T+2}| \tilde{y}_{T+1} y_T, \cdots, y_{T-p}, \theta^{(1)}).\)` - ... --- # Bayesian forecasting Given an ability to simulate `\(M\)` draws from `\(p(\theta|y)\)`, `\(p(y_{T+1}|y)\)` can be estimated as 1. either: `$$\hat{p}\left(y_{T+1} | \mathbf{y}\right)=\frac{1}{M} \sum_{i=1}^{M} p\left(y_{T+1} | \boldsymbol{\theta}^{(i)}, \mathbf{y}\right)$$` 2. or: `\(\hat{p}(y_{T+1}|y)\)` constructed from draws of `\(y_{T+1}^{(i)}\)` simulated from `\(p(y_{T+1} | \boldsymbol{\theta}^{(i)}, \mathbf{y})\)`. --- # Catering model uncertainty Uncertainty about the model itself catered for by: 1. Specifying a set of models: `\(M_1, M_2, \cdots,M_k\)` in which the true model is believed to lie. 2. Using Bayes Theorem to define: `$$p\left(M_{k} | \mathbf{y}\right)=\frac{p\left(\mathbf{y} | M_{k}\right) p\left(M_{k}\right)}{p(\mathbf{y})}, k=1,2, \cdots, K.$$` 3. Model averaging: `$$p_{BMA}\left(y_{T+1} | \mathbf{y}\right)=\frac{1}{K} \sum_{k=1}^{K} p\left(y_{T+1} | M_{k}, \mathbf{y}\right) p\left(M_{k} | \mathbf{y}\right).$$` --- # Other Application of Bayesian Methods - Bayesian optimization - Topic modelling - Mixtures and clustering - Bayesian networks - Missing data - etc.